From “Does It Work?” to “Does It Work Responsibly?”

Since 2020, Solve has built technical vetting into our selection process for every Global Challenge and select custom challenges. The goal is straightforward: if a team says their breakthrough tech works, we make sure it actually does.

For solutions built on novel technology, that means pressure-testing what’s in the application. Does the tech exist? Does it function as claimed? Are there any red flags? Our vetting now happens through structured interviews that generate rubric-aligned scores and comments, so final-round judges can weigh technical feasibility alongside everything else that matters.

Why we expanded the lens in 2025

Through 2024, we asked technical experts to focus strictly on feasibility and leave ethics to judges. But heading into 2025, one thing became obvious: you can’t separate how technology works from how it impacts people.

Consider a GenAI-powered medical chatbot. If it gives biased advice or leaks patient data, that’s not just an ethics issue—it’s a functional failure. It’s not doing its job safely or accurately. In social-impact tech, ethical breakdowns are product breakdowns.

That’s always been aligned with Solve’s core belief: technology should be mission-centered, not an end in itself. So we don’t just ask, “Does it work?” We ask, “Does it work in a way that advances its social-impact goal responsibly?”

AI has pushed this relationship into sharper focus because of the scale, pace of development, and societal consequences of these systems. Because AI mimics aspects of human cognition, it also prompts more explicitly human ethical questions: Does it mislead through hallucinations? Is it biased? Does it appropriate others’ work?

As a result, we have updated this criterion to technical feasibility and ethics.

In practice, this means assessing:

- Whether a solution functions as described

- Whether fairness, equity, and risk-mitigation considerations are embedded in its design and use

As before, we only undertake an assessment when the underlying technology is novel or when its use poses material ethical risk.

What our tech experts asked in 2025

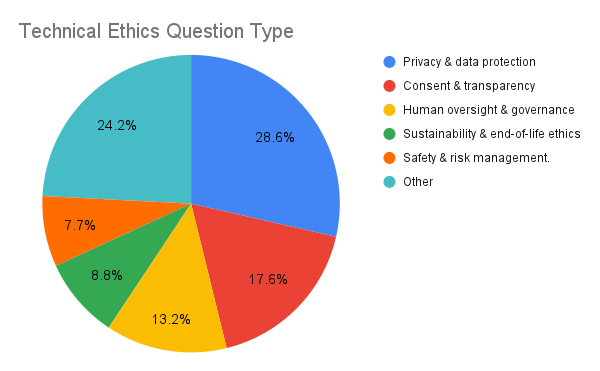

Ahead of vetting interviews in 2025, we asked our technical experts to share the ethics-related questions they planned to raise. We analyzed and coded those questions into themes.

What stood out? A heavy focus on data practices (privacy, consent) and operational safeguards (governance, safety). That’s no surprise since many of today’s social-impact solutions are digital-first and increasingly AI-native, even when hardware is involved.

Here’s the distribution:

We also saw that ethical risk isn’t one-size-fits-all. Certain themes emerged almost exclusively within particular challenge areas, such as child safety in learning, and sustainability and end-of-life considerations in climate.

Join the 2026 tech-vetting pool

A big shoutout to our 2025 tech vetters. You made this year’s selections sharper, fairer, and stronger.

If you have deep technical expertise and want to help evaluate the feasibility and ethics of social-impact technologies, we’d love to have you in the reviewer pool.

Right now, we’re especially looking for experts in climate-related fields—nature-based solutions, materials science, and other hard-tech climate applications.

The future of impact tech depends on rigor. Help us raise the bar.

